7 Questions With Shazeda Ahmed: on China, AI Safety Ideologies, and the Politics of AI

Artificial intelligence is often described in sweeping, well-worn terms: a technological revolution, a geopolitical race, even an existential threat to humanity. But according to Dr. Shazeda Ahmed, the narratives surrounding AI are themselves powerful political artifacts. Their familiarity is part of their power. They help determine how governments fund research, how journalists frame emerging technologies, and how policymakers imagine the future.

Ahmed’s work asks: Who gets to define what AI is, and what it is for?

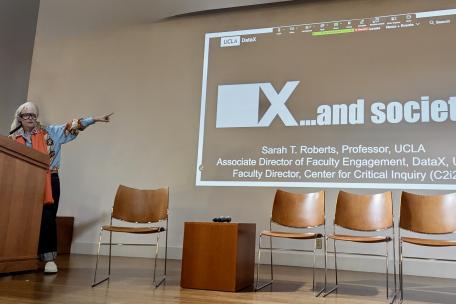

Trained in information studies at U.C. Berkeley and fluent in Mandarin, Ahmed investigates how ideologies about AI permeate institutional structures—from Silicon Valley, niche research communities, and government agencies to global media and China’s technology sector. Previously a UC Presidential/Chancellor’s Postdoctoral Researcher and now a UCLA DataX Postdoctoral Scholar, Ahmed represents a new generation of interdisciplinary scholars working at the intersection of AI research, science and technology studies, policy, and society, interrogating how power, politics, and history influence the technologies we build.

As universities, governments, and civil society confront and navigate the societal effects of AI, Ahmed’s work reflects a growing recognition central to the mission of DataX and the field of data justice: that AI is as much about power and public accountability as it is about technology.

A careful ethnographer and captivating storyteller, she draws on collaborations with scholars across law, anthropology, computer science, and cognitive science while engaging journalists, governance organizations including the EU Commission and the U.S. Department of State, and international human rights groups including Europe’s ARTICLE 19 and Access Now.

In this conversation, Ahmed reflects on the misconceptions surrounding AI narratives, China’s technology ecosystem, and the urgent need for interdisciplinary scholarship.

1. Your work crosses so many fields: science and technology studies, China studies, policy, and global studies, among others. How did you come to this interdisciplinary approach, and whose ideas have most informed your thinking?

For me now, it’s Quinn Slobodian and Lucy Suchman; I’m trying to make my work a mix of the two. I’m so inspired by my UCLA mentors Julia Powles, Safiya Noble, and Chris Kelty. Slobodian is a historian of global intellectual history who has recently written about how contentious ideas connecting IQ, eugenics, money, and borders persist through networks and intellectual communities that no longer operate in the shadows. Suchman is an anthropologist of technology whose work is incredible at dismantling the core assumptions behind technologies, and her recent research targets fallacies about applying AI in war. She talks about the thingness of AI—that it’s really a marketing term that helped raise funding and amass unwarranted, disproportionate power for its creators.

Anytime I spend too much time around people who don’t question what the word AI means, her work is a mind cleanser. She once reformulated a question I asked her in a way that really stuck with me: Why did the cognitivist approach to AI endure? As in, why is the way AI systems produce their outputs constantly compared with human cognition when there are still large gaps in understanding either of these things? A lot of my work now is trying to answer that question politically, looking at where those ideas appeal to people and what they try to build on that foundation.

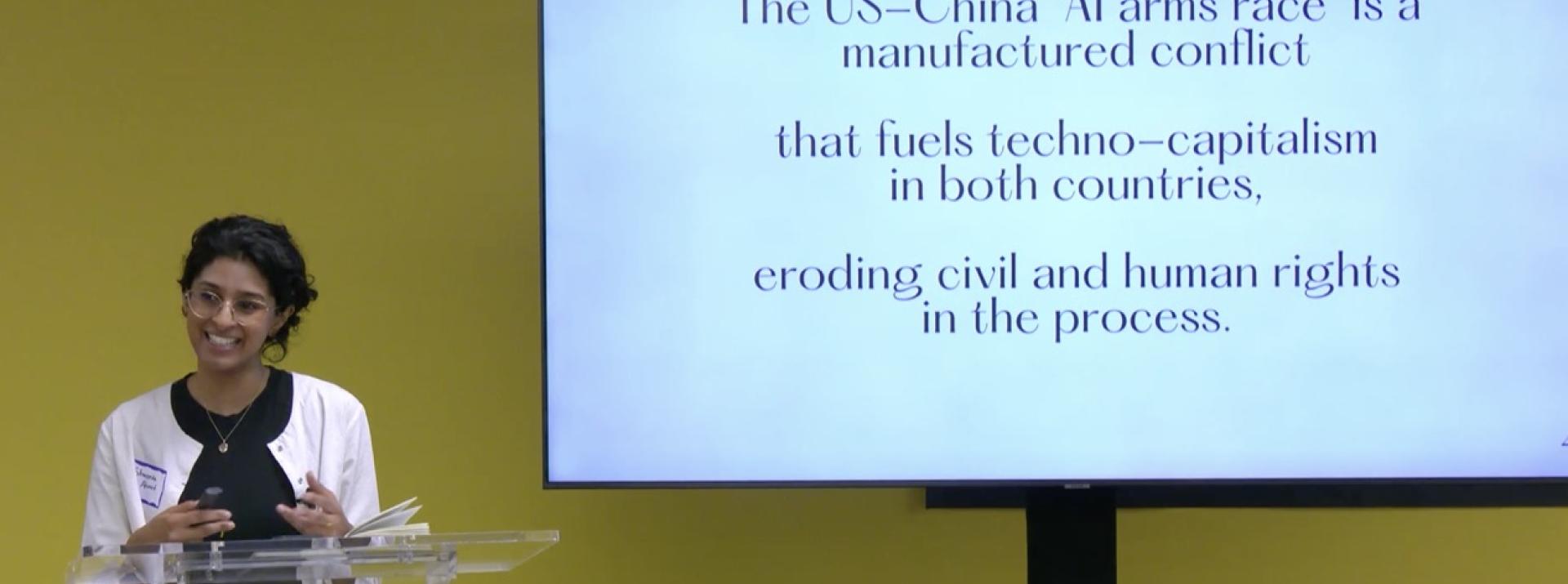

2. Much of the current debate frames AI development as a geopolitical race between the United States and China. Where did this narrative come from?

Over the last 10 or so years, there’s been a narrative coming out of Silicon Valley and the U.S. government that the U.S. and China are locked in an AI arms race or an AI Cold War. They’re pulling exactly that metaphor and making it seem like there is a finish line, as if one country will get to be the dominant AI superpower that provides most of the world’s access to AI systems and thereby shapes global values. I’ve had to look back at where that idea came from.

There’s an earlier iteration with Japan in the 1980s when Japan wanted to build out a global AI development program to augment a shrinking workforce and provide elder care to its aging population. People in the U.S. military and at Stanford spun that as Japan trying to build a militarized AI system to take over the world, even though there wasn’t really much evidence that that was happening. That got used to raise a bunch of AI funding for the Defense Advanced Research Projects Agency (DARPA). And then the AI winter (a downturn in AI development and funding often due to overhype) came shortly thereafter, followed by decades of historical amnesia until the rehashing of this zero-sum competition dynamic all over again with China.

3. Your research looks closely at China’s technology ecosystem, and your Ph.D. research examined China’s social credit system, which is often portrayed in the West as a dystopian surveillance regime. What did you find? What do Western audiences often misunderstand?

A lot of the suppositions made in the U.S. about social credit and Chinese tech in general tend to not be accurate. When I go to China, I try to ask people if they get the sense here that they're in an arms race with the United States. Often people say no, that's not what is shaping the discourse.

There are all kinds of domestic issues around the labor force with tech in China. They’re dealing with their own crises similar to ours, such as graduates struggling to find jobs and the question of how to regulate AI (where they’ve made more inroads than the U.S. has). So, the framing that dominates Western conversations often doesn’t reflect what people in China actually see as the key issues.

My dissertation work was on the social credit system, which is still misunderstood in the West. It’s a system where the Chinese government wants people to comply with administrative law because there have been tons of people who don’t pay their taxes or who commit everyday violations. They developed a blacklisting system, where if you commit these infractions and don’t show up to your court date, a judge can escalate it and put you on a list that is shared in the media, sometimes posted in public spaces, and (most relevant to my work) given to tech companies to place restrictions on their users who appear on state blacklists.

That information gets shared with airlines or high-speed rail systems. A lot of what people got wrong in the United States is they thought everyone in China had a morality score and that there was a sliding door between the government and tech companies handing data back and forth. Through my interviews in China, I saw how those relationships are negotiated and often constrained. Local governments at the city level feared over-stepping their authority if they “double-punished” blacklisted citizens and were wary of using tech company data they could not verify. Tech companies wanted to preempt impending legislation by designing their own solutions before the state could decide those for them. Though of course that was years ago, and the ambit Chinese tech companies once had to self-regulate has since narrowed.

4. Your work also examines the global rise of the AI safety movement. Why has that become such an important area of study?

I came to this research subject from following how the U.S.-China AI arms race narrative was becoming a U.S.-China AGI arms race narrative. AGI refers to artificial general intelligence, which is a highly contested concept suggesting that there will one day be AI systems that outperform humans at most tasks. Some people think building AGI is essential to solving all social problems and unlocking utopia. Others think that’s a pseudoscientific fiction. But that first view is becoming increasingly popular. It’s receiving far more funding than work on mitigating the everyday social harms that we already know AI causes. I wanted to understand why that was happening and what motivated the communities behind it.

5. You’ve written about how certain ideas about AI safety move from niche research communities into media coverage and public policy. How does that happen?

The media increasingly platforms people who come from that perspective. There’s philanthropic funding used to place journalist fellows in major newsrooms. Those journalists sometimes already come in with AI safety-informed ideological priors about AI. This raises concern about whether they’re laundering that ideology through major media.

If you look at TIME Magazine’s 100 Most Influential People in AI, something like a fifth of the people on the list in recent years come from the AI safety field. If you’re not an expert, your first impression may be that these people’s views represent the consensus in the field. But as my colleague Klaudia Jaźwińska increasingly believes, based on our recent research, that’s a misrepresentation and a distortion. My work examines how a pretty niche set of ideas has suddenly left the margins and taken up policymakers’ imaginations.

6. If policymakers and universities want to govern AI responsibly (if that’s even possible), what voices should they be listening to?

There is a whole body of experts who study the social aspects of AI. They should be consulted on equal footing with technical experts, if not before them. I say that because I have seen a lot of harm arise when technical experts are treated as though they can automatically speak to the social implications of AI that they themselves have not studied.

7. How do you translate this research into the classroom?

This Spring Quarter, I’m teaching an undergraduate Data, Justice, and Society cluster course on Critical AI Studies in Global Perspective. We’ll be looking at the cultural forces that shape AI systems and how people respond to them around the world, which I hope will unseat universalizing assumptions about tech that are prevalent in the U.S. I am curious about what feels possible in technocultures outside of this country that doesn’t feel possible here, and I look forward to exploring other geographies’ experiences with AI and labor, the environment, language, and more with my students.

One course I would love to teach in the future is on critical AI studies through the lens of wildfire technology. If fire is the object of study, it opens up a whole emerging field around building technology to prevent fires, fight fires, and manage the aftermath, including things like insurance calculations and recovery planning.

That space is ripe for questioning techno-solutionism. You can look at everything from Indigenous land management practices to debates about whether AI contributes to climate change or whether it might help address it. California even has an Office of Wildfire Technology Research and Development, so it’s a very concrete way to examine how these technologies are being built and deployed.

What I want students to understand is that AI is always situated in the real world. The questions it raises aren’t just technical questions—they’re social judgment questions about policy, governance, and human expertise. Using wildfire technology as a case study makes those issues immediate and accessible, especially here in California.

More broadly, it helps students see how AI operates in real institutions and real jobs—not just speculative futures, but also the systems and decisions that already shape their world.