Stacks Xchange: Field Notes on AI Across Disciplines

April 7, 2026

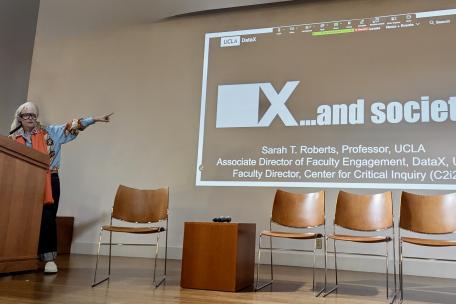

Stacks Xchange is DataX’s multi-part graduate-student series on collecting and using data in creative ways across disciplines. It was conceived for experienced researchers, for scholars just dipping their toes into AI’s deep waters, and for those actively seeking AI solutions for their work.

“It’s important to me that graduate students have an interdisciplinary space and a semiformal venue to discuss the technologies they use to advance their research and teaching,” says Chris Johanson, DataX Faculty Director of Innovative Applications and Creative Activity, and Associate Professor, UCLA Classics and Digital Humanities.

Knowledge Graphs and Cultural Heritage

The first event featured a presentation by Chongwen Liu, “Application of Knowledge Graph-Driven AI in Cultural Heritage Conservation.” Liu is a Ph.D. candidate in Conservation of Material Culture in the UCLA/Getty Interdepartmental Program (IDP) and a co-organizer of the series.

As Liu describes it: “Cultural heritage conservation involves fragmented, multidisciplinary knowledge and high-stakes decisions that must translate into real-world preservation actions. My research explores how knowledge graph (KG)-driven AI can address these challenges by connecting discrete knowledge bases, improving accessibility, and supporting structured decision-making.”
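To make “connecting discrete knowledge bases” concrete, here is a minimal sketch in plain Python. It is not Liu’s implementation. It assumes two hypothetical sources, an object catalog and a materials reference, that already share identifiers in the Linked Data manner (for example `mat:earthenware`). All identifiers, predicates, and values are illustrative. Once the sources are pooled, a two-hop query can answer a question that neither source answers on its own.

```python
# Hypothetical records from two separate knowledge bases, stored as
# (subject, predicate, object) triples. All values are illustrative placeholders.
catalog = {
    ("obj:001", "madeOf", "mat:earthenware"),
    ("obj:002", "madeOf", "mat:bronze"),
}
materials = {
    ("mat:earthenware", "vulnerableTo", "risk:soluble_salts"),
    ("mat:bronze", "vulnerableTo", "risk:chloride_corrosion"),
}

# Both sources use the same identifiers (mat:...), so their triples can be
# pooled into one graph without any record-by-record mapping.
graph = catalog | materials

def objects_at_risk(graph, risk):
    """Two-hop query: object -madeOf-> material -vulnerableTo-> risk."""
    materials_at_risk = {s for s, p, o in graph if p == "vulnerableTo" and o == risk}
    return sorted(s for s, p, o in graph if p == "madeOf" and o in materials_at_risk)

print(objects_at_risk(graph, "risk:soluble_salts"))  # ['obj:001']
```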

In his talk, Liu shared field notes tracing two interconnected technological storylines in the development of KGs:

- the evolution of Semantic Web and Linked Data infrastructures

- recent efforts to reduce large language model (LLM) hallucination, from baseline Retrieval-Augmented Generation (RAG) to graph-based RAG frameworks, including their development, accessibility, and current use cases
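The baseline RAG pattern can be sketched as follows. The system retrieves the stored passages most similar to a question and places them in the prompt, so the model answers from that context rather than from memory alone. This is a minimal sketch under stated assumptions:

- the passages are hypothetical;
- word-count vectors stand in for the learned embeddings real systems use;
- no language model is called, so `build_prompt` only assembles the text that would be sent to one.

```python
import math
import re
from collections import Counter

# Hypothetical document store; a real system would hold many chunked documents.
passages = [
    "Bronze disease is an active corrosion process driven by chlorides.",
    "Soluble salts can crystallize in porous ceramics and cause flaking.",
    "Silk textiles are sensitive to light and should be displayed at low lux.",
]

def vectorize(text):
    """Term-frequency vector; a stand-in for the dense embeddings most RAG systems use."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, k=1):
    """Return the k passages most similar to the query."""
    q = vectorize(query)
    return sorted(passages, key=lambda p: cosine(q, vectorize(p)), reverse=True)[:k]

def build_prompt(query, k=1):
    """Ground the model by placing the retrieved passages ahead of the question."""
    context = "\n".join(f"- {p}" for p in retrieve(query, k))
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"

print(build_prompt("What causes flaking in porous ceramics?"))
```

For this question, only the soluble-salts passage shares words with the query, so it is the one placed in the prompt. Graph-based RAG keeps this retrieve-then-generate loop. The difference is that it retrieves entities and their relations, either instead of or in addition to isolated passages.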

For the Stacks Xchange audience, he drew on his implementation experience to discuss selection criteria for different graph-based RAG methods, outline key technical steps in building a KG-driven AI framework, and share practical development notes on tools and frameworks such as Neo4j and GraphRAG.
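The typical steps of a KG-driven retrieval pipeline can be outlined in code. This is a generic local-neighborhood approach written for illustration. It is not Liu’s framework. It is also not the algorithm used by GraphRAG, which, for example, also summarizes detected communities of entities, and it is not Neo4j’s tooling. The following are all assumptions:

- the triples are hypothetical and treated as already extracted;
- entity linking is naive substring matching;
- the function names are placeholders.

```python
from collections import defaultdict

# Step 1 (assumed already done): triples extracted from source texts. In practice
# extraction is often done with an LLM or NLP pipeline; these triples are hypothetical.
triples = [
    ("bronze", "susceptible to", "bronze disease"),
    ("bronze disease", "caused by", "chlorides"),
    ("chlorides", "mitigated by", "low relative humidity"),
    ("earthenware", "susceptible to", "salt damage"),
]

def build_index(triples):
    """Step 2: index each triple under both its subject and object (undirected adjacency)."""
    index = defaultdict(list)
    for s, p, o in triples:
        index[s].append((s, p, o))
        index[o].append((s, p, o))
    return index

def link_entities(question, index):
    """Step 3: link the question to graph nodes by naive substring matching."""
    q = question.lower()
    return [node for node in index if node in q]

def expand(index, seeds, hops=2):
    """Step 4: collect every triple within `hops` edges of the seed entities."""
    facts, frontier, seen = set(), set(seeds), set(seeds)
    for _ in range(hops):
        next_frontier = set()
        for node in frontier:
            for s, p, o in index[node]:
                facts.add((s, p, o))
                for n in (s, o):
                    if n not in seen:
                        seen.add(n)
                        next_frontier.add(n)
        frontier = next_frontier
    return facts

def graph_context(question, triples, hops=2):
    """Step 5: serialize the retrieved subgraph as text context for an LLM prompt."""
    index = build_index(triples)
    facts = expand(index, link_entities(question, index), hops)
    return "\n".join(f"{s} {p} {o}." for s, p, o in sorted(facts))

print(graph_context("How should bronze be stored?", triples, hops=3))
```

With `hops=3`, the context chains from bronze through bronze disease and chlorides to low relative humidity, and the unrelated earthenware triple is left out. With `hops=2`, the humidity fact is not reached. The hop count therefore trades how much related knowledge is recovered against prompt length.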

Come for the Conversation, Stay for the Pizza

In the second meeting, archaeology student Luis Rodriguez-Perez examined his past 3D virtual reality (VR) projects and ideas involving archaeological and historic structures. He workshopped how VR can connect embodiment with the spaces of the past while navigating what are essentially exercises in critical fabulation.

“One can learn so much from fellow students, and one can benefit from presenting in front of an audience of peers in a setting that feels safe, supportive, and one that also provides lunch,” says Johanson.

Interpreting AI: Vibecrunching

The third presentation, Vibecrunching: Heuristic Interpretation of Information in the Age of AI, took place on April 6.

In the talk, Sophia Toubian, a Ph.D. candidate in Information Studies, introduced “vibecrunching.” This methodological approach combines computational analysis with interpretive methods. It studies how readers’ feelings or judgments about information, such as its credibility, bias, or accessibility, emerge as that information moves across contexts.

Drawing on examples from scientific research, public-facing explanations, and generative AI outputs, the project explores how meaning shifts through translation, simplification, and recombination. The presentation also reflected on ongoing collaborative work with the UCLA AI & Cultural Heritage Lab (AICHL). That work examines these questions in relation to historical narratives produced by large language models, including how AI systems generate and circulate accounts of events such as the Holocaust.

Together, these cases highlight how AI-generated narratives often optimize for plausibility and narrative coherence rather than evidentiary grounding. As a result, the interpretive heuristics through which readers recognize credibility, bias, and accessibility are an increasingly important site of analysis.

Why Do Languages Have the Sounds They Do?

On April 20, Muhammad Rehan, a third-year Ph.D. student in the Indo-European Studies Program, will take up an age-old question in phonological typology: Why are some combinations of sounds more common than others and, more fundamentally, why do languages have the sounds they do?

Rehan’s main claim is that the universal perceptual similarity of sound contrasts predicts how frequent those contrasts are across languages. The proposed causal link is segmental stability. Sounds that are harder to perceive as distinct are less stable and thus more likely to change over time (diachronically). That would explain why they are less frequent in the languages spoken around the world today.
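Rehan’s causal chain runs from perceptibility to stability to frequency, and it can be made concrete with a toy two-state model. This is a minimal sketch under illustrative assumptions, not Rehan’s method:

- each language either has a given contrast or lacks it;
- in each generation, a language with the contrast loses it with probability `loss`, which is assumed to be higher for perceptually similar sounds;
- a language without the contrast gains it with probability `gain`.

The rates and function names are hypothetical. The long-run share of languages with the contrast solves p = p(1 - loss) + (1 - p)gain, which gives p = gain / (gain + loss).

```python
def stationary_share(gain, loss):
    """Long-run share of languages with the contrast: fixed point of the update below."""
    return gain / (gain + loss)

def simulate_share(gain, loss, p0=1.0, generations=2000):
    """Iterate the expected share generation by generation, starting from share p0."""
    p = p0
    for _ in range(generations):
        # Keep the contrast with prob. (1 - loss); acquire it with prob. gain.
        p = p * (1 - loss) + (1 - p) * gain
    return p

# Hypothetical rates: same gain; the perceptually similar pair is lost 3x as often.
for label, loss in [("distinct pair", 0.01), ("similar pair", 0.03)]:
    print(label, round(stationary_share(0.01, loss), 2))
# distinct pair 0.5
# similar pair 0.25
```

Under these assumptions, tripling the loss rate halves the long-run share, even though no contrast is ever ruled out. In this model, rarity emerges from instability alone.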

The final Spring Quarter meeting of Stacks Xchange is scheduled for May 18, though the programming is still evolving.

All graduate students are welcome to attend. If you would like to present your work at Stacks Xchange in the coming year, please contact DataX.